United Kingdom: Pro-Innovation, Industry-Led

The UK has charted a distinct path — principles-based, sector-specific, and innovation-first. No single AI Act. Instead, existing regulators apply five cross-sectoral principles while industry drives standards and governance.

UK’s Distinct Approach

3rd Largest AI Sector Globally, Largest in Europe

The UK’s AI sector contributed £12 billion in gross value added in 2024 and could reach £20–90 billion by 2030. With over £100 billion in private investment since mid-2024, the UK is a global AI powerhouse.

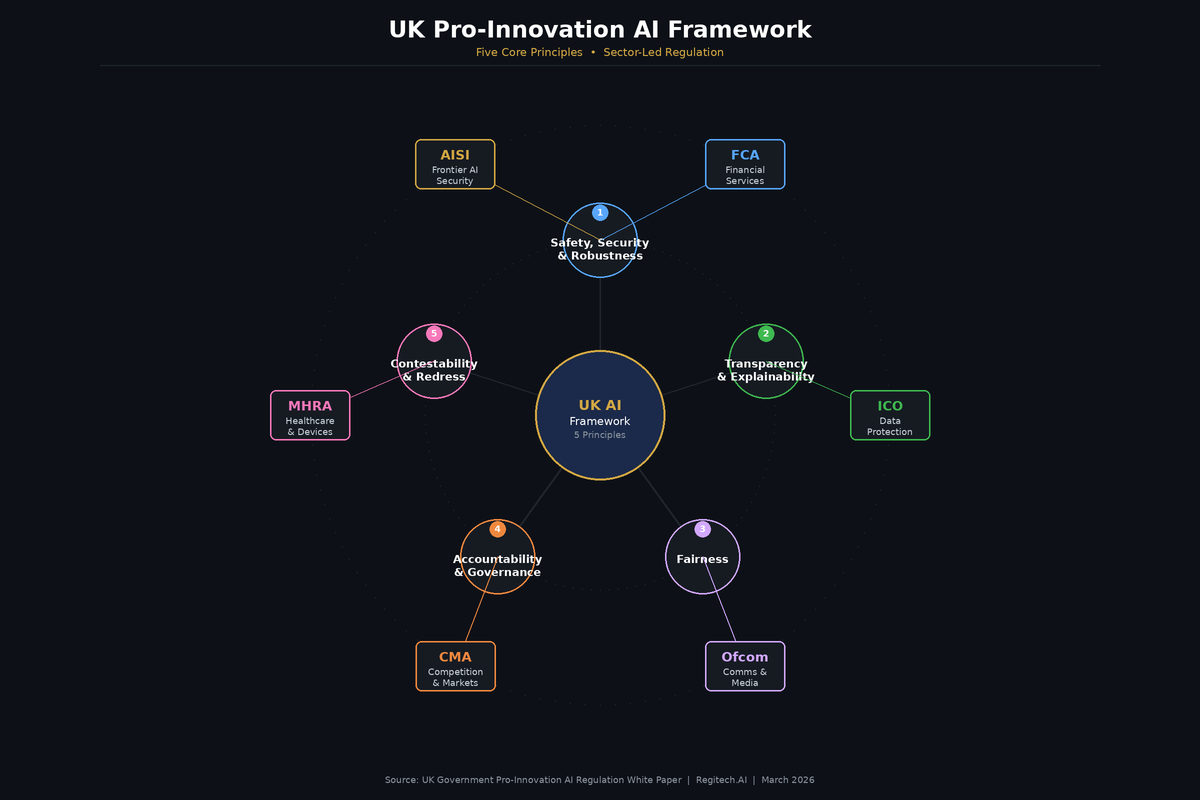

Five Cross-Sectoral AI Principles

Rather than a single prescriptive AI Act, the UK government established five principles that existing sector regulators apply within their respective domains. This approach provides flexibility while ensuring consistent governance foundations.

- Safety, security and robustness

- Appropriate transparency and explainability

- Fairness

- Accountability and governance

- Contestability and redress

Data (Use and Access) Act 2025 & Copyright Impact Assessment

The UK’s first legislative step toward AI-specific governance addresses the intersection of copyright, creative industries, and AI development — a uniquely UK priority.

March 2026: Copyright & AI Impact Assessment Published

Published pursuant to Section 135 of the Data (Use and Access) Act 2025 — the government’s evidence-first approach to AI copyright policy

Four Policy Options Under Consultation

Key Provisions & Timeline

- Section 135: Economic impact assessment across all policy options, with focus on SME impacts

- Section 136: Report on copyright works in AI development, including transparency and enforcement proposals

- Transparency: Expert working groups exploring training data summaries, crawler disclosures, and metadata standards

- Extraterritoriality: Assessment addresses AI systems developed outside the UK

- No preferred option yet: “We will not introduce reforms until confident they meet objectives”

What This Means for AI Governance

The UK’s evidence-first approach means policy is still forming. Organizations operating in the UK market should prepare for transparency requirements across all likely outcomes, while building governance infrastructure flexible enough to accommodate whichever policy option is adopted.

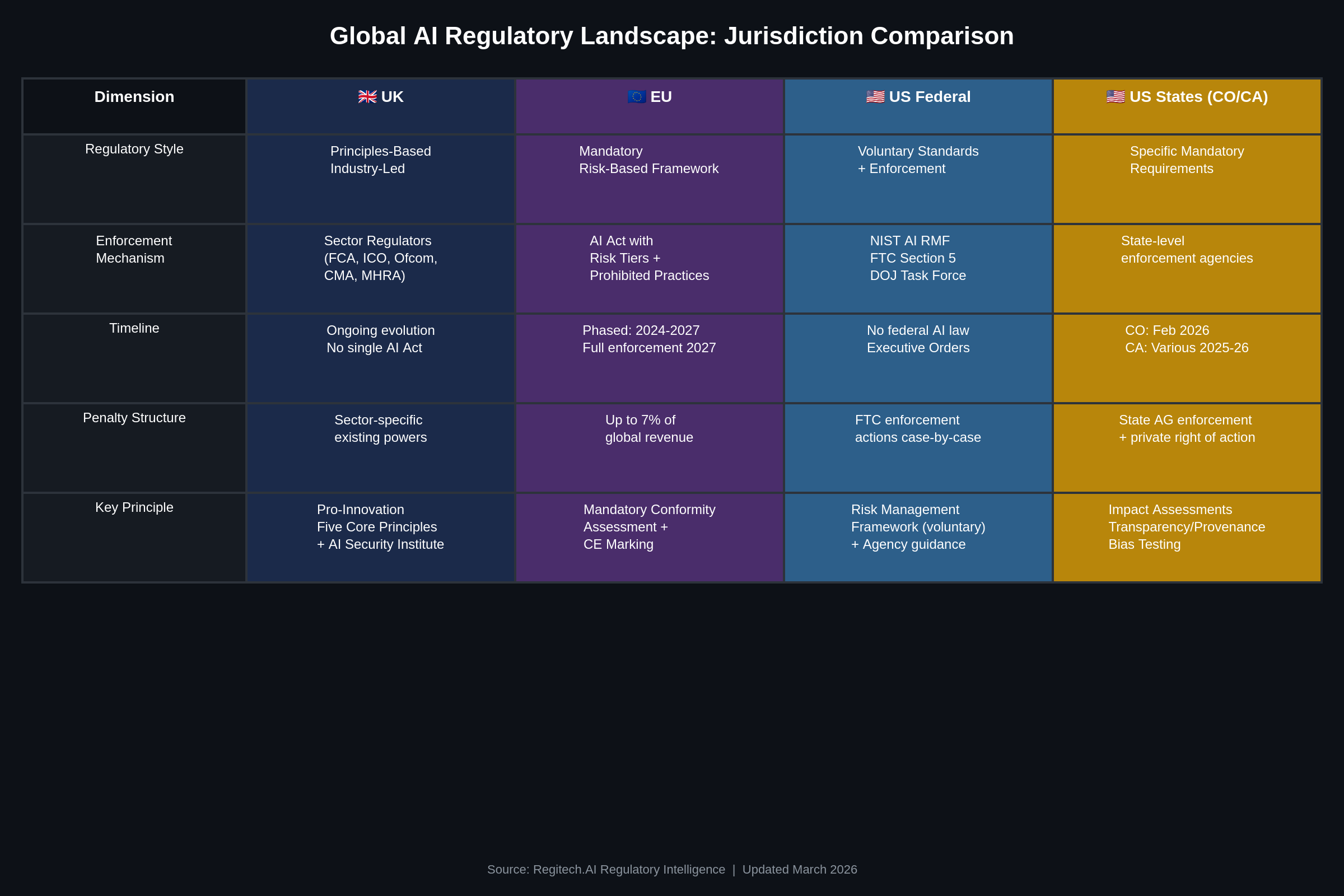

Five Jurisdictions, Five Approaches, One Business Reality

Companies operating across five jurisdictions, including the UK, EU, and US, face five distinct regulatory philosophies simultaneously. The UK’s principles-based approach creates different compliance dynamics from the EU’s mandatory framework or US state-level enforcement.

Where UK Converges with Other Approaches

Where UK Diverges

UK AI Strategy: Investment, Infrastructure & International Leadership

The UK government’s AI Opportunities Action Plan, AI Impact Summit participation, and substantial infrastructure investments signal a long-term commitment to becoming an AI superpower.

AI Impact Summit 2026

The UK championed how AI can “supercharge growth, unlock new jobs, and improve public services” at the AI Impact Summit in India. Led by the Deputy Prime Minister and the AI Minister, the UK delegation emphasized responsible adoption and fair safety standards across the Global South.

AI Opportunities Action Plan

A 50-point strategic roadmap, with 38 commitments met by January 2026. It includes AI Growth Zones in five UK locations, £1.5B for national supercomputing, a National Data Library backed by more than £100M, and a goal to upskill 10 million workers by 2030.

AI Security Institute

Renamed from the AI Safety Institute in February 2025 to focus on national security and misuse risks. It tests frontier AI models, develops safety protocols, and conducts alignment research. DSIT intends to establish it as a statutory body, giving it regulatory certainty.

Key Government Investments

Signaling long-term commitment to AI infrastructure and governance capability

Sector Regulator Framework: Context-Specific AI Governance

Each UK sector regulator applies the five core principles within its own domain. This creates a compliance landscape that requires sector-specific expertise: a unified governance platform must speak the language of each regulator.

FCA & PRA

Financial Services

- Consumer Duty: AI must deliver good outcomes

- Model risk management for lending/insurance

- Explainability for adverse financial decisions

- Treating Customers Fairly across the AI lifecycle

ICO

Data Protection

- UK GDPR compliance for AI data processing

- AI auditing framework for automated decisions

- Algorithmic transparency requirements

- Data (Use and Access) Act 2025 provisions

MHRA

Healthcare & Life Sciences

- AI/ML medical device certification guidance

- NHSX AI ethics framework for NHS deployments

- CQC compliance for private healthcare AI

- NICE guidelines for clinical decision support

Ofcom

Communications & Broadcasting

- AI-generated content transparency

- Online Safety Act implications for AI

- Deepfake detection and labeling

- AI in content moderation standards

CMA

Competition & Consumer Protection

- AI market competition analysis

- Foundation model market review

- Consumer protection in AI marketplaces

- Digital Markets, Competition & Consumers Act

AI Security Institute

Frontier AI Safety

- Frontier model evaluation and testing

- Loss-of-control risk mitigation

- Human-in-the-loop protocol design

- International safety standard alignment

Why UK Organizations Choose Jurisdiction-Neutral Governance

The UK’s evolving framework — from principles-based guidance toward potential statutory requirements — means governance infrastructure built today must be flexible enough to accommodate any policy outcome.

Organizations That Build Now

The Risk of Waiting

Governance Infrastructure That Meets the UK Where It’s Going

Whether the UK adopts stronger copyright protections, broader text and data mining (TDM) exceptions, or a comprehensive AI Bill, jurisdiction-neutral governance records position your organization for any outcome. Regitech’s four-layer architecture delivers defensible provenance, multi-agent monitoring, and integrated dispute resolution designed to satisfy UK sector regulators and international frameworks simultaneously.