The AI Governance Landscape: Phased Enforcement in an Era of Expanding Scope

EU high-risk deadlines are extended to December 2027, US state enforcement begins in June 2026, and the UK is charting a principles-based path with its Copyright & AI Impact Assessment. Five jurisdictions, five approaches, one business reality.

EU AI Act Omnibus Amendments: What Changed on March 16, 2026

The European Parliament’s joint IMCO-LIBE committee released Final Compromise Amendments to Regulation 2024/1689 — the most significant revision since the Act’s adoption. Seven Compromise Amendments recalibrate enforcement timelines, expand scope to 12+ product safety directives, and introduce new prohibited practices.

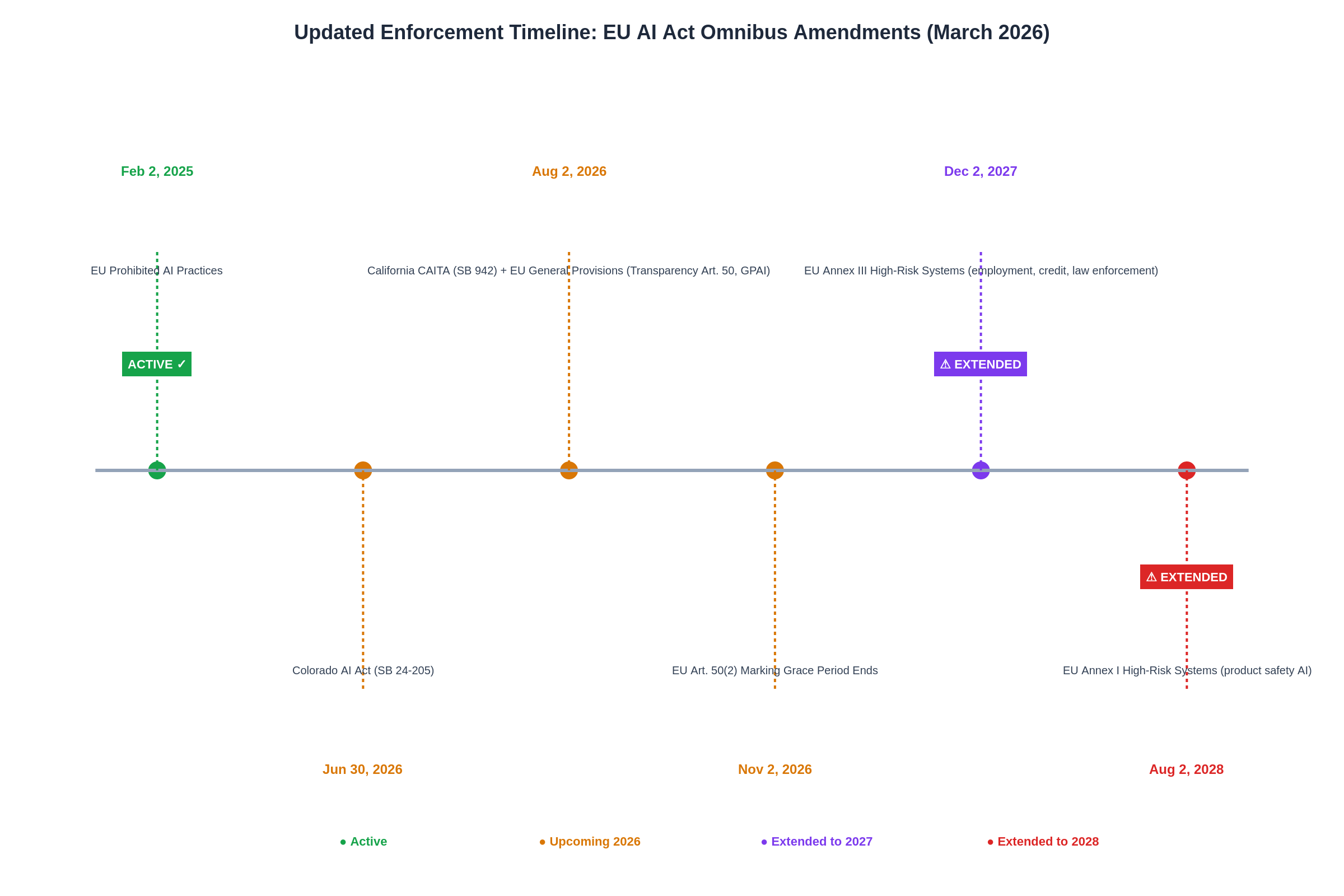

High-Risk Enforcement Postponed

Deadlines for Annex III systems (employment, credit, law enforcement) move from Aug 2026 to Dec 2, 2027. Annex I product-safety AI moves to Aug 2, 2028.

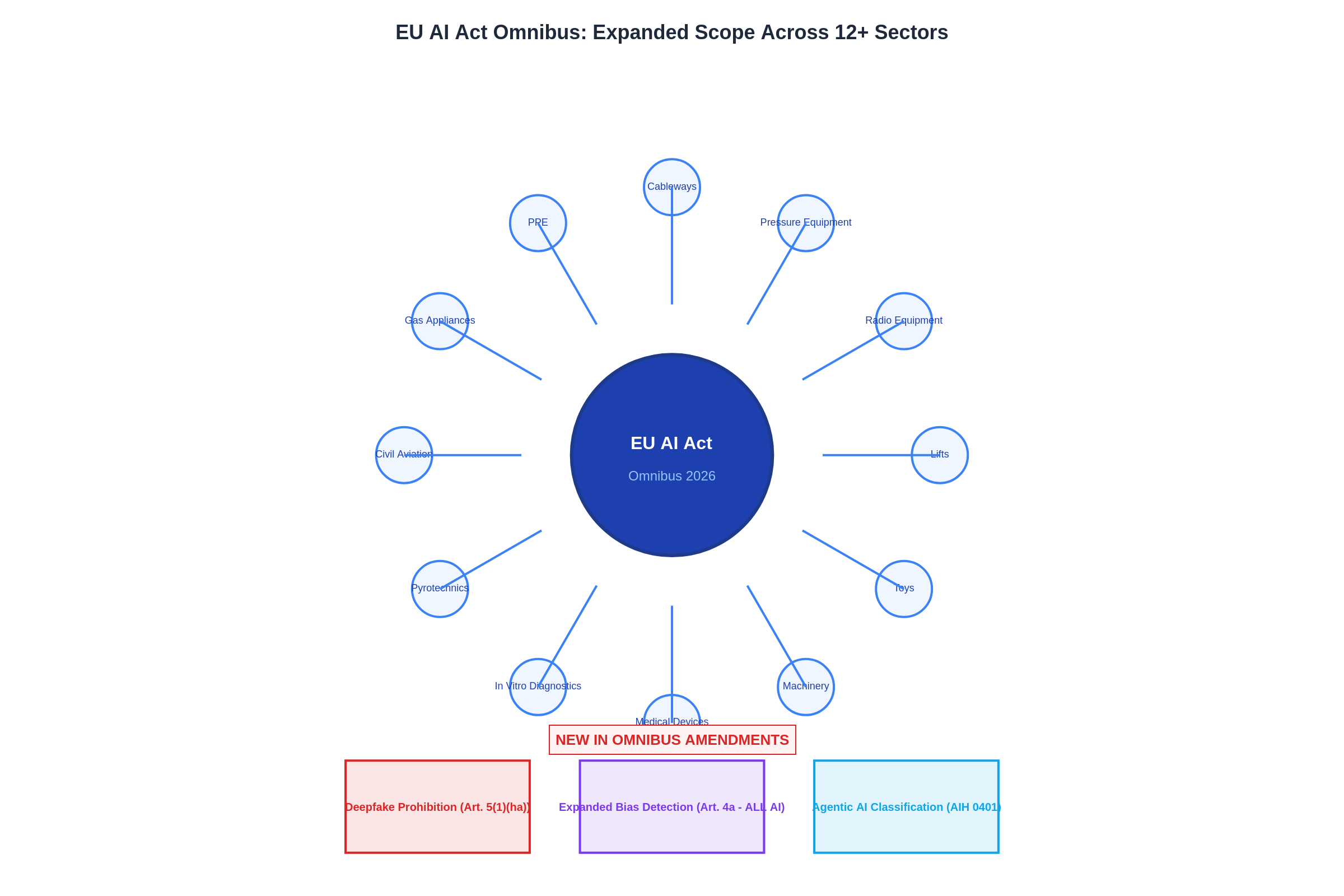

12+ Sectoral Directives

AI governance requirements are integrated into the product safety rules for medical devices, machinery, toys, lifts, radio equipment, aviation, and more via Arts. 110a–110l.

Deepfake Nudification Banned

Art. 5(1)(ha) prohibits non-consensual AI-generated intimate imagery — the first such ban in any comprehensive AI framework.

Bias Detection Expanded to All AI

Art. 4a extends legal basis for processing sensitive data for bias detection to all AI providers and deployers, not just high-risk systems.

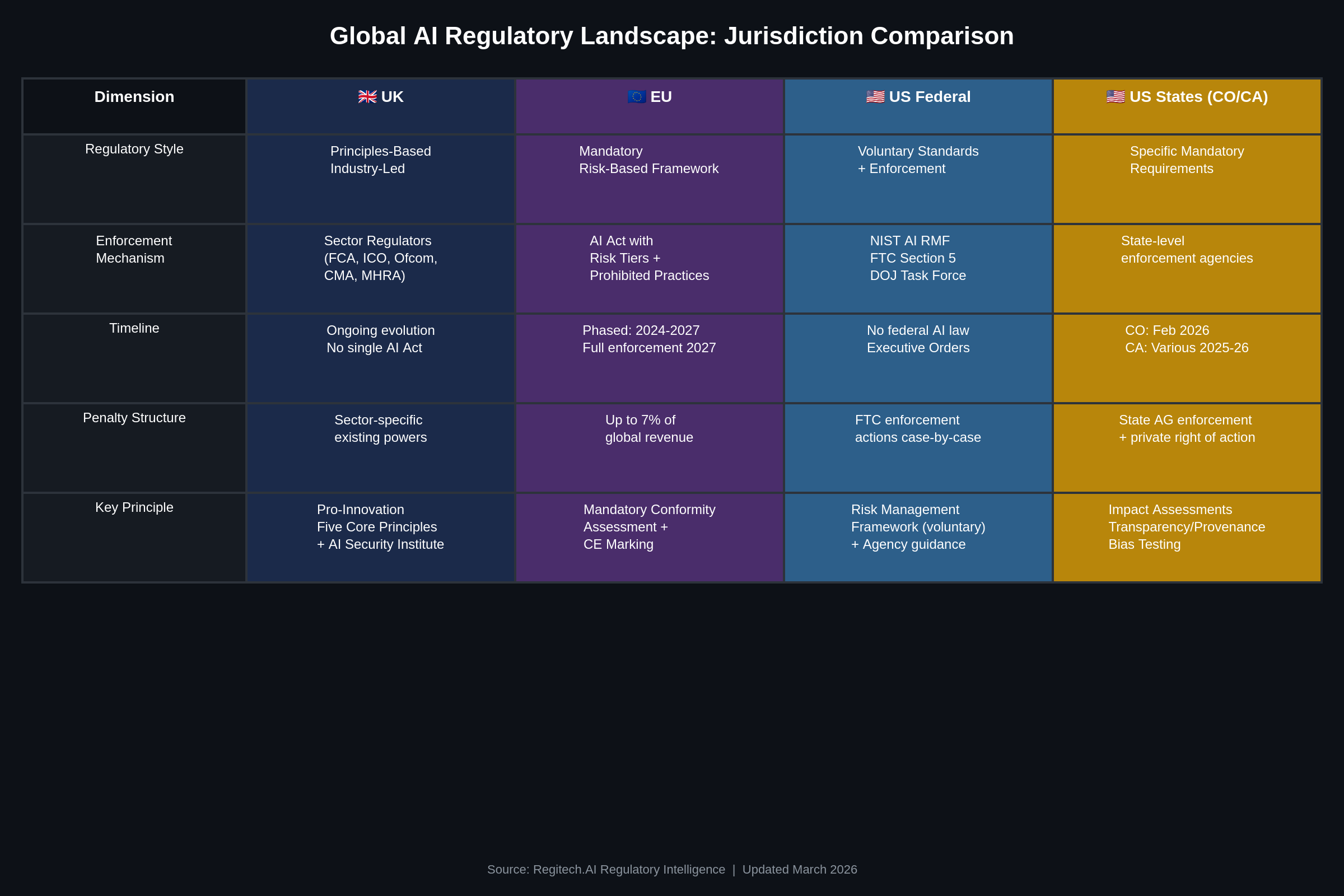

UK Pro-Innovation Framework: Principles-Based, Industry-Led

The UK has charted a distinct regulatory path — no single AI Act, but five cross-sectoral principles applied by existing sector regulators (FCA, ICO, Ofcom, CMA, MHRA). The March 2026 Copyright & AI Impact Assessment signals the next phase of this evolving approach.

Five Core Principles

Safety, transparency, fairness, accountability, and contestability — applied by existing regulators within their domains, not through a single prescriptive law.

Copyright & AI Assessment

The Data (Use and Access) Act 2025 mandated an impact assessment evaluating four policy options for AI training data and creative industry protection.

3rd Largest AI Market

Largest AI sector in Europe, 79% CAGR 2022–2024, with £100B+ private investment since mid-2024 and 38/50 AI Action Plan commitments met.

Statutory AI Bill

Government signals potential binding requirements for frontier AI models, with the AI Security Institute positioned to become a statutory body.

Federal AI Policy Framework: What Businesses Need to Know

Between December 2025 and March 2026, the federal government issued three coordinated instruments reshaping AI governance. The Administration’s unified national approach creates new dynamics for businesses operating across jurisdictions.

DOJ AI Litigation Task Force

Established to pursue a unified national framework by addressing state laws that may create barriers to interstate commerce.

Commerce Dept. Evaluation

Comprehensive review of state AI laws, identifying approaches that align with or diverge from federal policy objectives.

FTC Policy Statement on AI

Confirms existing consumer protection law under Section 5 applies to AI, with enforcement priorities including algorithmic fairness and transparency.

The Updated Enforcement Timeline: Phased, Not Delayed

The EU Omnibus Amendments extend high-risk deadlines by 16–24 months, but general provisions, state enforcement, and federal oversight remain on the original schedule. Every quarter from now through 2028 brings a new enforcement milestone.
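The 16–24 month range can be reproduced with simple date arithmetic. The sketch below is illustrative only: it assumes the original high-risk deadline was Aug 2, 2026 for both categories (the text above gives only "Aug 2026"), and the function and variable names are hypothetical.

```python
from datetime import date


def months_between(start: date, end: date) -> int:
    """Calendar-month difference; ignores the day of month
    (every date used here falls on the 2nd)."""
    return (end.year - start.year) * 12 + (end.month - start.month)


# Assumption: Aug 2, 2026 is used as the baseline for both categories.
ORIGINAL_DEADLINE = date(2026, 8, 2)

# Amended dates as stated in this document.
AMENDED_DEADLINES = {
    "Annex III (employment, credit, law enforcement)": date(2027, 12, 2),
    "Annex I (product-safety AI)": date(2028, 8, 2),
}

extensions = {
    name: months_between(ORIGINAL_DEADLINE, amended)
    for name, amended in AMENDED_DEADLINES.items()
}

for name, months in extensions.items():
    print(f"{name}: +{months} months")
```

Under that baseline assumption, the Annex III extension works out to 16 months and the Annex I extension to 24 months, which matches the range quoted above.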

[Timeline graphic with three tracks: Federal Direction, State Enforcement, and EU (Amended)]

Expanded Scope: AI Governance Across 12+ Sectors

The Omnibus Amendments integrate AI governance requirements into existing EU product safety directives through Articles 110a–110l. Companies whose AI systems are embedded in physical products now face harmonized compliance obligations across the full product lifecycle.

New Prohibited Practice

Art. 5(1)(ha) bans AI-generated non-consensual intimate imagery (“nudification”). US federal and state law contains no equivalent prohibition within a comprehensive AI framework. An exception applies to providers with effective continuous safety measures.

Bias Detection for All AI

Art. 4a extends the legal basis for processing sensitive data (race, health, etc.) for bias correction to all AI providers and deployers — not just high-risk systems. Subject to strict safeguards including pseudonymization and access controls.

Agentic AI Classification

Annex XIV introduces AIH 0401 — the world’s first regulatory classification code for autonomous AI agents. This signals EU regulatory infrastructure is preparing for next-generation AI governance.

Five Governance Frameworks, One Business Reality

Companies operating across jurisdictions face distinct governance requirements from the EU, UK, US states, and the federal government simultaneously. Each framework reflects a different regulatory philosophy; effective governance infrastructure satisfies all of them without internal inconsistency.

The Business Certainty Challenge

The federal government’s unified national approach aims to reduce regulatory fragmentation — a goal that benefits businesses. At the same time, state enforcement dates remain active, the EU AI Act’s general provisions deadline of August 2026 applies to any company serving European markets, and the UK’s principles-based approach creates a fifth compliance dimension for companies operating in the world’s third-largest AI market.

Federal Enforcement Confirms the Need for AI Governance

The FTC’s March 2026 Policy Statement makes clear: existing federal law already requires AI accountability. Companies need governance infrastructure not because states mandate it, but because the federal government itself recognizes these risks.

FTC Section 5 Enforcement Priorities

What This Means for Businesses

AI governance is a federal priority, not just a state-level requirement. The FTC’s enforcement agenda validates the need for accountability infrastructure.

Companies with documented AI governance records are better positioned for FTC reviews, state enforcement actions, and EU conformity assessments.

The NIST AI Risk Management Framework — the Administration’s preferred voluntary standard — provides a roadmap that aligns with federal objectives.

Jurisdiction-neutral governance records serve as business insurance that holds up under any regulatory framework — federal, state, or international.

Proportionality: Where EU, UK, and US Approaches Converge

Despite philosophical differences, the EU amendments, UK pro-innovation framework, and US federal policy share a commitment to proportionate regulation that does not disproportionately burden smaller enterprises or stifle innovation.

EU Omnibus: SME Relief

US Federal: Innovation-First Approach

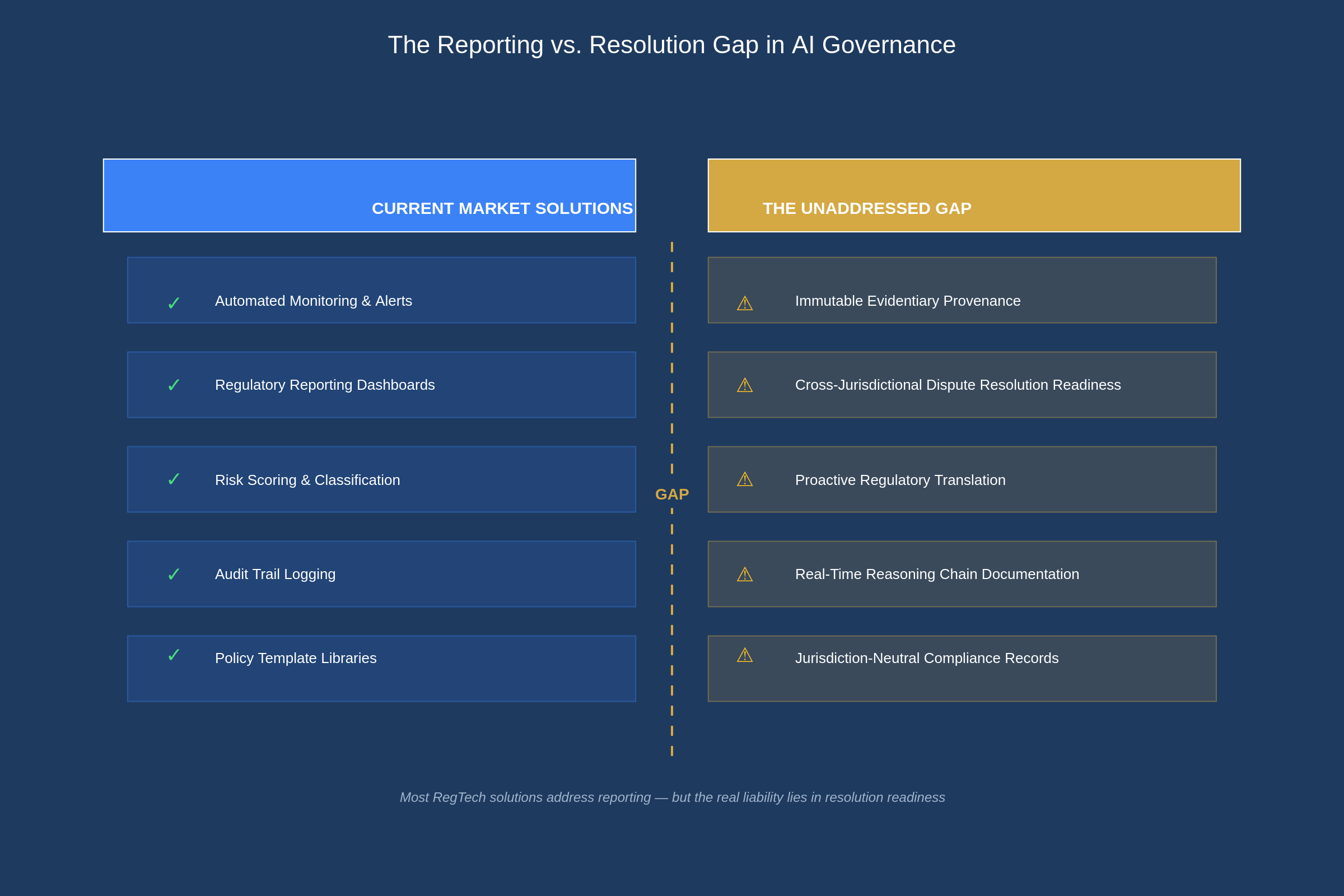

The Market Gap: Monitoring Alone Is Not Enough

Current compliance solutions address what happens before a problem is identified. What businesses also need is infrastructure for what happens after — defensible evidence, cross-jurisdictional resolution, and proactive regulatory translation.

What the Market Currently Provides

What Businesses Actually Need

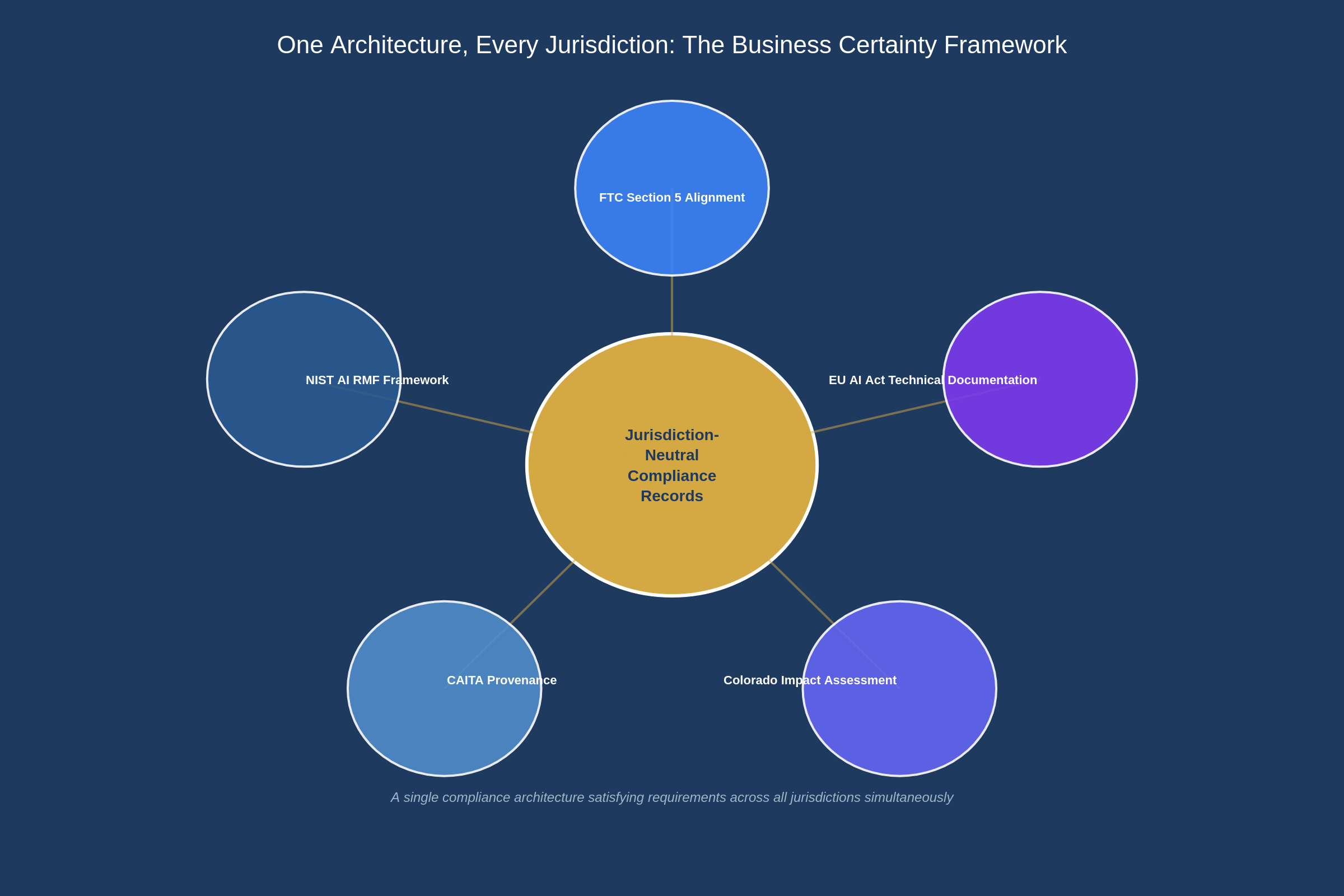

One Architecture, Every Jurisdiction

Rather than maintaining separate compliance programs for each jurisdiction, forward-thinking companies are investing in jurisdiction-neutral governance infrastructure that produces defensible evidence of responsible AI practice regardless of which regulatory framework prevails.

Aligned with the NIST AI Risk Management Framework

The Administration’s preferred approach to AI governance is voluntary, industry-driven, and standards-based — embodied in the NIST AI Risk Management Framework. Effective governance infrastructure maps directly to NIST’s four core functions:

GOVERN

Immutable governance records and accountability structures

MAP

AI decision pathway tracing and risk identification

MEASURE

Real-time algorithmic fairness monitoring and bias testing

MANAGE

Pre-structured resolution mechanisms for identified risks
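As one illustration of how records might be tagged by NIST function and made tamper-evident, the sketch below chains each entry to the previous one with a SHA-256 hash. This is a minimal example under stated assumptions, not a prescribed implementation: the record fields, helper names, and sample notes are all hypothetical, and hash chaining makes later edits detectable rather than physically impossible.

```python
import hashlib
import json

# The four core functions named above.
NIST_FUNCTIONS = ("GOVERN", "MAP", "MEASURE", "MANAGE")
GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log: list, function: str, note: str) -> dict:
    """Append a record that commits to the hash of the previous record."""
    if function not in NIST_FUNCTIONS:
        raise ValueError(f"unknown NIST AI RMF function: {function}")
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"function": function, "note": note, "prev_hash": prev_hash}
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify(log: list) -> bool:
    """Recompute every hash and check that the chain links are unbroken."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {key: record[key] for key in ("function", "note", "prev_hash")}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


# Hypothetical sample entries.
log = []
append_record(log, "GOVERN", "Model owner and escalation contact assigned")
append_record(log, "MEASURE", "Bias test run on hiring model")

# Simulate an after-the-fact edit on a copy of the log.
tampered = [dict(record) for record in log]
tampered[0]["note"] = "edited after the fact"

print(verify(log))       # True
print(verify(tampered))  # False
```

The design choice here is that each record commits to its predecessor, so altering any earlier entry invalidates the chain from that point forward.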

AI Governance Isn’t a Regulatory Burden — It’s Business Insurance

Whether regulation comes from federal enforcement, state mandates, or international requirements, companies with defensible AI governance records are better positioned to respond to reviews, enforcement actions, and conformity assessments.

Companies That Invest Now

Companies That Wait

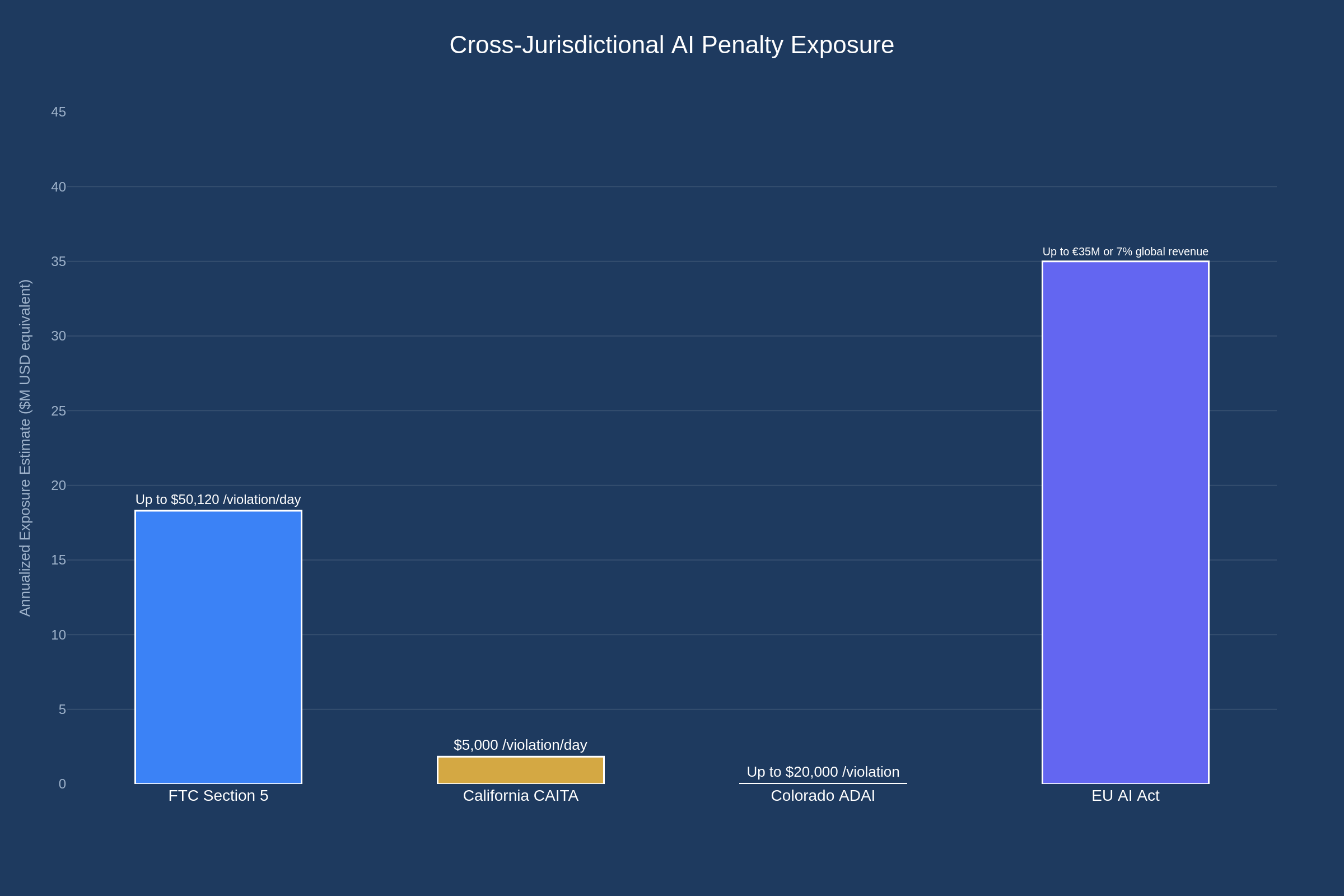

Cross-Jurisdictional Penalty Exposure

For companies operating across multiple jurisdictions, penalty exposure is cumulative. A single AI governance failure can trigger enforcement actions under federal, state, and international frameworks simultaneously.

Five jurisdictions, five philosophies, one deadline pressure. Whether it’s the EU’s mandatory framework, the UK’s principles-based approach, or US state enforcement, the companies that build governance infrastructure now will define the standard that laggards must eventually meet.